The AI Infrastructure Regime Accelerates: AMD’s Blowout Q1 Confirms the Structural Repricing of Compute Power

Daily Market Read | Category: Earnings read | Date: May 7, 2026

Tiger Capital Research

AMD’s Q1 2026 earnings delivered a clear message: this was a blowout quarter that didn’t just beat expectations but confirmed that the structural acceleration of AI infrastructure demand is now the dominant force reshaping technology capex, compute architecture, and semiconductor leadership.

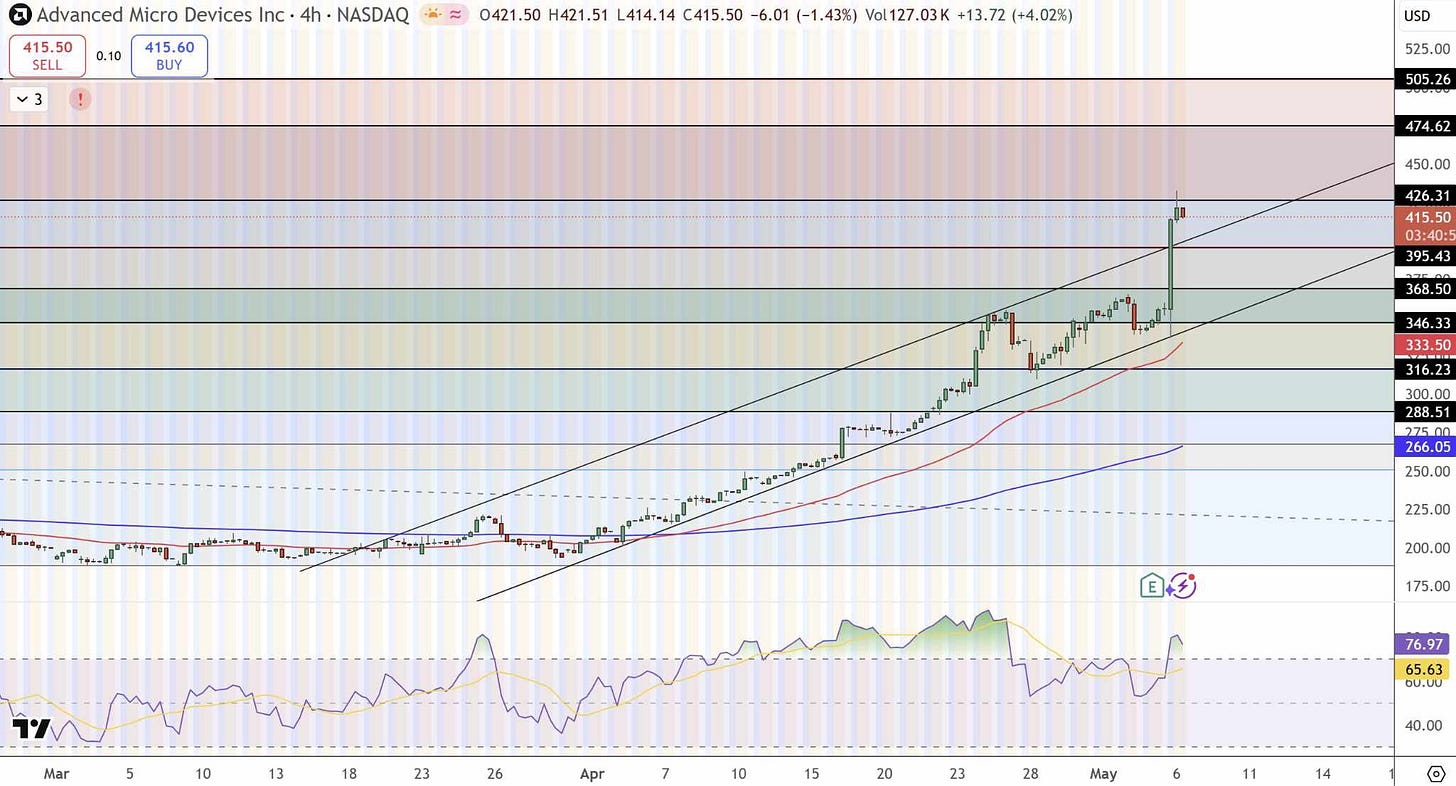

The headline figures tell the story cleanly. Revenue hit $10.3 billion, up 38% year-over-year and well ahead of both company guidance and Street forecasts. EPS came in at $1.37, beating consensus by a healthy margin. But the real signal sat in the segment mix: data center revenue exploded to a record $5.8 billion, up 57% year-over-year, vaulting past expectations and cementing its role as the new core engine of the business. Free cash flow reached a record $2.6 billion, fully 25% of revenue, while gross margin expanded 170 basis points to 55%. This isn’t cyclical strength; it’s the visible evidence of a regime change in how the world builds and scales intelligence.
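The ratios in that paragraph can be checked with a few lines of arithmetic. The sketch below uses only the reported figures quoted above; the "implied year-ago" values are our own derivations, not reported numbers, and the non-data-center growth line assumes data center plus all other segments sum to total revenue.

```python
# Back-of-the-envelope checks on the Q1 2026 figures quoted above (USD billions).
# Inputs are the reported numbers; year-ago values are derived, not reported.
# ASSUMPTION: data center plus all other segments together sum to total revenue.

revenue = 10.3          # Q1 2026 revenue
revenue_yoy = 0.38      # +38% year-over-year
data_center = 5.8       # Q1 2026 data center revenue
data_center_yoy = 0.57  # +57% year-over-year
free_cash_flow = 2.6    # Q1 2026 free cash flow


def implied_prior(current: float, growth: float) -> float:
    """Back out a year-ago value from a current value and its YoY growth rate."""
    return current / (1.0 + growth)


fcf_margin = free_cash_flow / revenue
dc_share = data_center / revenue

prior_revenue = implied_prior(revenue, revenue_yoy)
prior_dc = implied_prior(data_center, data_center_yoy)
# Combined growth of every segment outside data center
other_growth = (revenue - data_center) / (prior_revenue - prior_dc) - 1.0

print(f"FCF margin:                 {fcf_margin:.1%}")
print(f"Data center share:          {dc_share:.1%}")
print(f"Implied Q1 2025 revenue:    ${prior_revenue:.2f}B")
print(f"Implied Q1 2025 DC revenue: ${prior_dc:.2f}B")
print(f"Implied non-DC growth:      {other_growth:.1%}")
```

Run as written, it reports a free cash flow margin of about 25.2% and a data center share of about 56.3% of revenue, consistent with the "fully 25%" figure. On the stated assumption, it also implies the rest of the business grew roughly 19% combined, well below the 57% data center pace.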

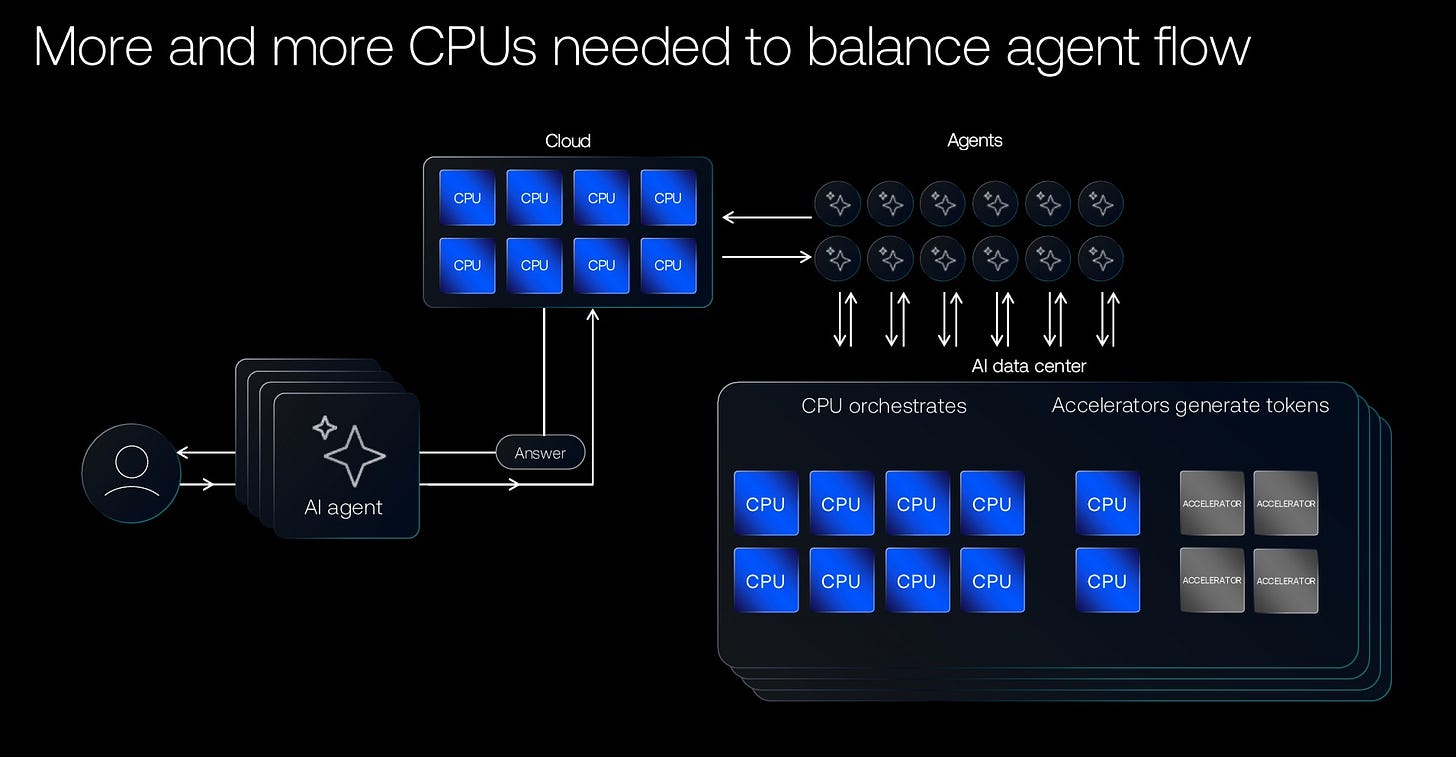

What’s driving it? The quiet but powerful rise of agentic AI and inference workloads. These are no longer just training runs; they require orchestration, data movement, and real-time decision layers that dramatically expand the role of high-performance CPUs alongside GPUs. AMD’s CEO laid it out plainly on the call: the server CPU market is no longer the $60 billion opportunity growing at an 18% CAGR that many once modeled. It is now on track to exceed $120 billion by 2030 at a CAGR of more than 35%, with CPUs and GPUs moving toward a more balanced 1:1 ratio in next-generation AI racks. The shift isn’t incremental; it’s multiplicative.
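One way to see why the revision is "multiplicative" is to discount both scenarios back to a starting point. The text does not give a base year, so the sketch below assumes, purely for illustration, that both the old and new figures are 2030 endpoints compounded over five years from a common 2025 base.

```python
# Consistency check on the two server CPU market scenarios quoted above (USD billions).
# ASSUMPTION (not stated in the text): both endpoints are for 2030 and are
# compounded over five years from a common 2025 base.

YEARS = 5  # assumed 2025 -> 2030 horizon


def implied_base(end_value: float, cagr: float, years: int) -> float:
    """Discount an end-year value back to its starting value at a constant CAGR."""
    return end_value / (1.0 + cagr) ** years


old_base = implied_base(60.0, 0.18, YEARS)   # "$60 billion ... 18% CAGR"
new_base = implied_base(120.0, 0.35, YEARS)  # "$120 billion by 2030 ... 35% CAGR"

# Ratio of five-year growth multiples: how much larger the endpoint becomes
# from the same starting point when the CAGR rises from 18% to 35%.
multiple_ratio = (1.35 ** YEARS) / (1.18 ** YEARS)

print(f"Base implied by old model: ${old_base:.1f}B")
print(f"Base implied by new model: ${new_base:.1f}B")
print(f"Endpoint ratio, same base: {multiple_ratio:.2f}x")
```

Under that assumption, both scenarios start from roughly the same $26 billion to $27 billion base, so the doubling of the 2030 figure comes almost entirely from the higher growth rate: five years at 35% multiplies the base about 4.5x, versus about 2.3x at 18%.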

Q2 guidance reinforced the momentum: revenue is targeted at roughly $11.2 billion (up 46% year-over-year), with server CPU revenue expected to grow more than 70%. The company highlighted expanded partnerships, including a major multi-gigawatt deal with Meta for Instinct GPUs and deeper collaboration with OpenAI, as well as the upcoming Zen 6-based, 2nm EPYC Venice platform, which promises a 2x throughput advantage in AI-optimized workloads. Sampling of the MI450 series is underway, with volume production ramping in the second half. AMD now expects data center AI revenue alone to surpass $10 billion by 2027, and management expressed confidence in long-term growth targets above 80%.
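The guidance can be set against the Q1 actuals with the same kind of arithmetic. This sketch treats the "roughly $11.2 billion" guide as a point estimate, which is our simplifying assumption; the implied year-ago figure is derived, not reported.

```python
# Q2 2026 guidance set against the Q1 2026 actuals quoted above (USD billions).
# ASSUMPTION: the "roughly $11.2 billion" guide is treated as a point estimate.

q1_revenue = 10.3
q1_yoy = 0.38
q2_guide = 11.2
q2_guide_yoy = 0.46

sequential_growth = q2_guide / q1_revenue - 1.0        # Q1 -> Q2 step-up
implied_q2_2025 = q2_guide / (1.0 + q2_guide_yoy)       # derived year-ago base
yoy_acceleration_pts = (q2_guide_yoy - q1_yoy) * 100    # change in YoY growth rate

print(f"Implied sequential growth: {sequential_growth:.1%}")
print(f"Implied Q2 2025 revenue:   ${implied_q2_2025:.2f}B")
print(f"YoY growth acceleration:   {yoy_acceleration_pts:.0f} percentage points")
```

On these inputs, the guide implies about 8.7% sequential growth and a year-over-year rate that accelerates by roughly 8 percentage points, from 38% to 46%.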

Not everyone caught the wave. ARK Invest, led by Cathie Wood, sold approximately $150 million in AMD shares across April and early May, including a notable tranche on the very day of the earnings release, only to watch the stock surge more than 18% in the immediate aftermath, pushing it to fresh all-time highs. The rotation into other large-cap names was consistent with ARK’s playbook, but the timing underscored how even the most forward-looking investors can momentarily step aside from the very regime they helped spotlight years earlier.

For investors, the takeaway is larger than one earnings beat. We are witnessing a genuine regime transition in the technology stack: from general-purpose compute to purpose-built AI infrastructure, where integrated CPU-GPU systems, advanced software stacks like ROCm, and end-to-end rack-scale solutions become the new moat. Supply-chain frictions (memory and component cost inflation, wafer and backend capacity constraints) are real and are already pressuring the client and gaming segments into the second half. Yet those same constraints are also creating pricing power and ensuring that companies with deep partner relationships and diversified portfolios are the ones best positioned to capture the expanded total addressable market.

AMD’s results didn’t create this AI infrastructure supercycle; they simply confirmed that it is accelerating and broadening beyond the handful of hyperscalers that dominated early headlines. We remain constructive on the leaders in this new compute paradigm: companies that deliver both the silicon and the system-level integration required for training, inference, and agentic workloads at scale. We continue to favor selective exposure to semiconductor names with proven data center execution, strong balance sheets, and clear line of sight to multi-year growth that markets are only beginning to fully discount.

The old playbook of waiting for the next consumer refresh or traditional enterprise upgrade is being replaced by one in which capital expenditure flows relentlessly toward intelligence infrastructure. Yesterday’s “AI hype” is today’s baseline reality, and the numbers are catching up fast.
